Turning vision tests into meaningful monitoring

-

OKKO Health

-

Researcher

UX designer

UI designer

-

End-to-end redesign of the results experience

OKKO Health needed to redesign its vision results experience to increase repeat engagement and ensure patients clearly understood the value of monitoring their eyesight at home.

While the product successfully measured vision changes for people living with AMD, user behaviour showed a significant drop-off after initial tests. The challenge was not clinical accuracy but perception: users did not always understand what their results meant or why continued testing was important.

The goal of this project was to reframe how results were communicated, reduce anxiety and misinterpretation, and position OKKO as a supportive monitoring companion rather than just a vision test.

Process

-

Usability testing with AMD patients

Behavioural data analysis (drop-off patterns)

Competitor analysis (health & habit-forming apps)

Emotional journey mapping

Thematic analysis (Attride-Stirling framework)

-

Clear problem statement (value perception vs feature gap)

“How might we increase repeat testing?” framing

Engagement and value success metrics

Tone and language guardrails

Results communication principles (“usual visual range”)

-

Multiple results visualisation concepts

Copy iterations to reduce anxiety and misinterpretation

Accessible UI system (WCAG-aligned)

“Building vision range” onboarding state

Interactive Figma prototype (end-to-end flow)

-

Moderated usability testing (value & comprehension focus)

Iterated results experience

Design framework for ongoing engagement

Project overview

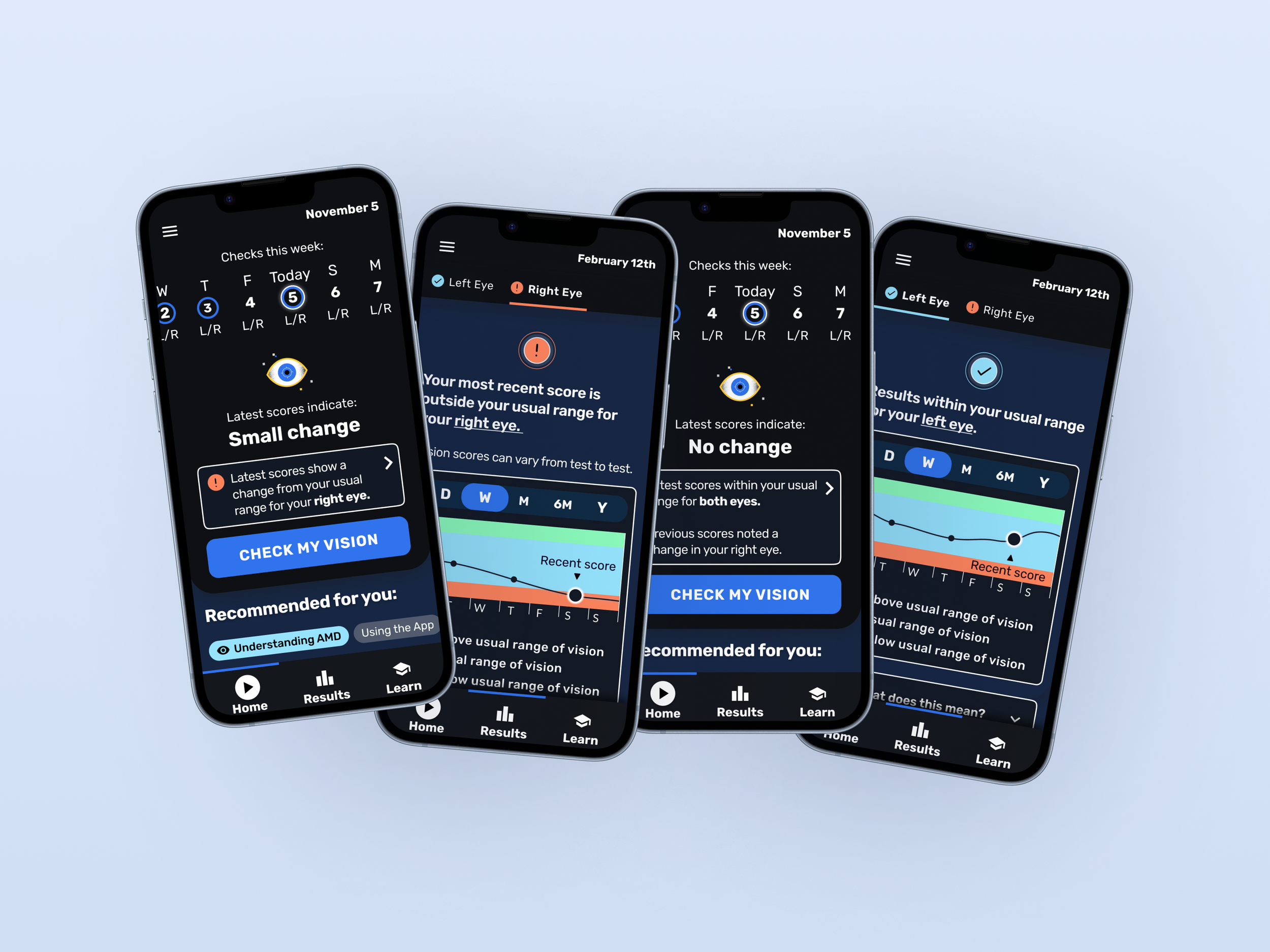

In redesigning the OKKO Health results experience, I addressed three key challenges to increase repeat usage and ensure patients felt genuine value from monitoring their vision at home:

Users did not clearly understand the value of their results

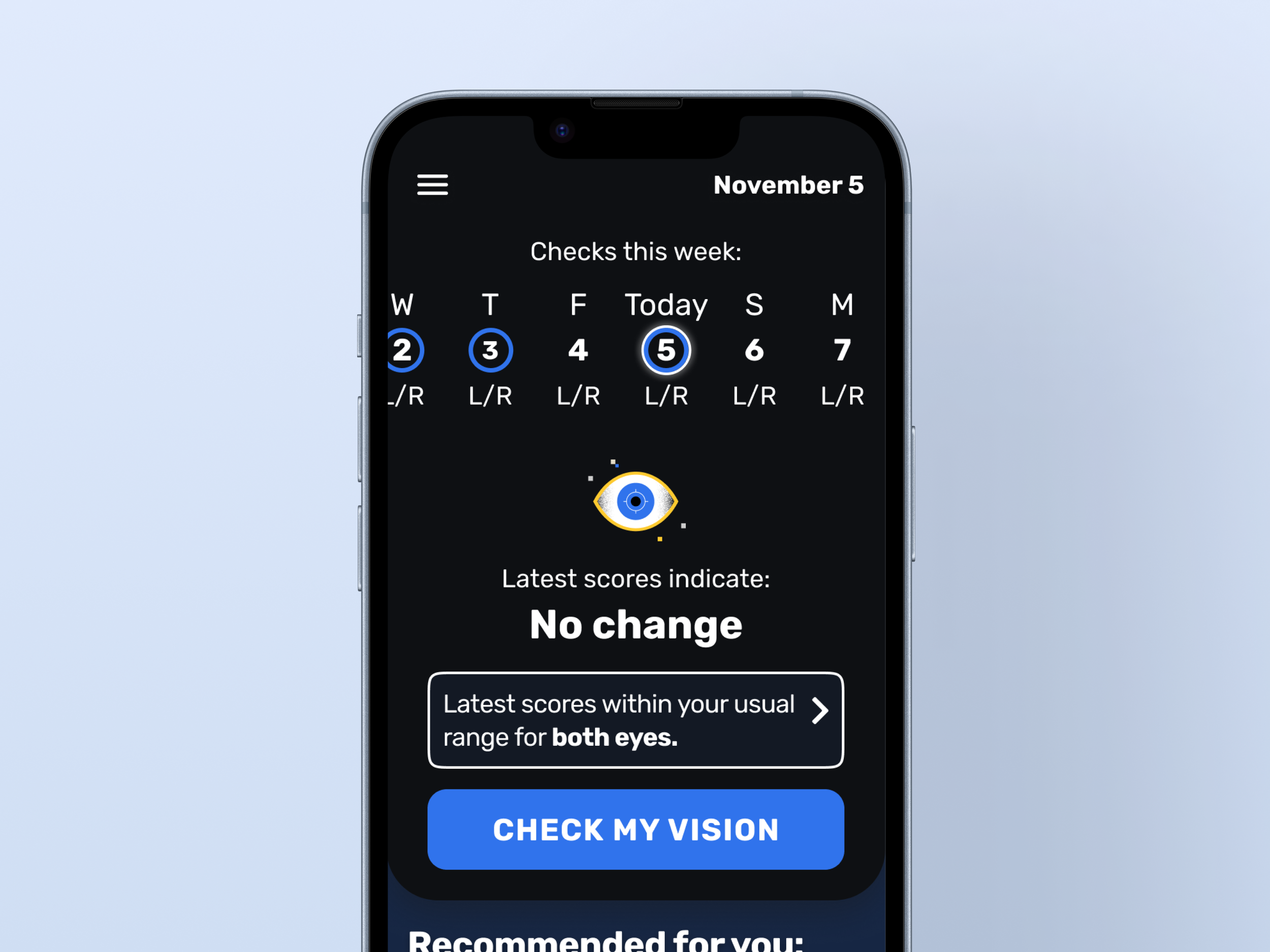

Data showed a significant drop-off after 1–2 tests. While the product was clinically sound, users did not consistently see why regular testing mattered.

I conducted moderated usability sessions with AMD patients, synthesised findings using thematic analysis, and mapped emotional journeys across the results experience. A recurring insight was that users interpreted single test results as definitive, rather than part of a pattern over time.

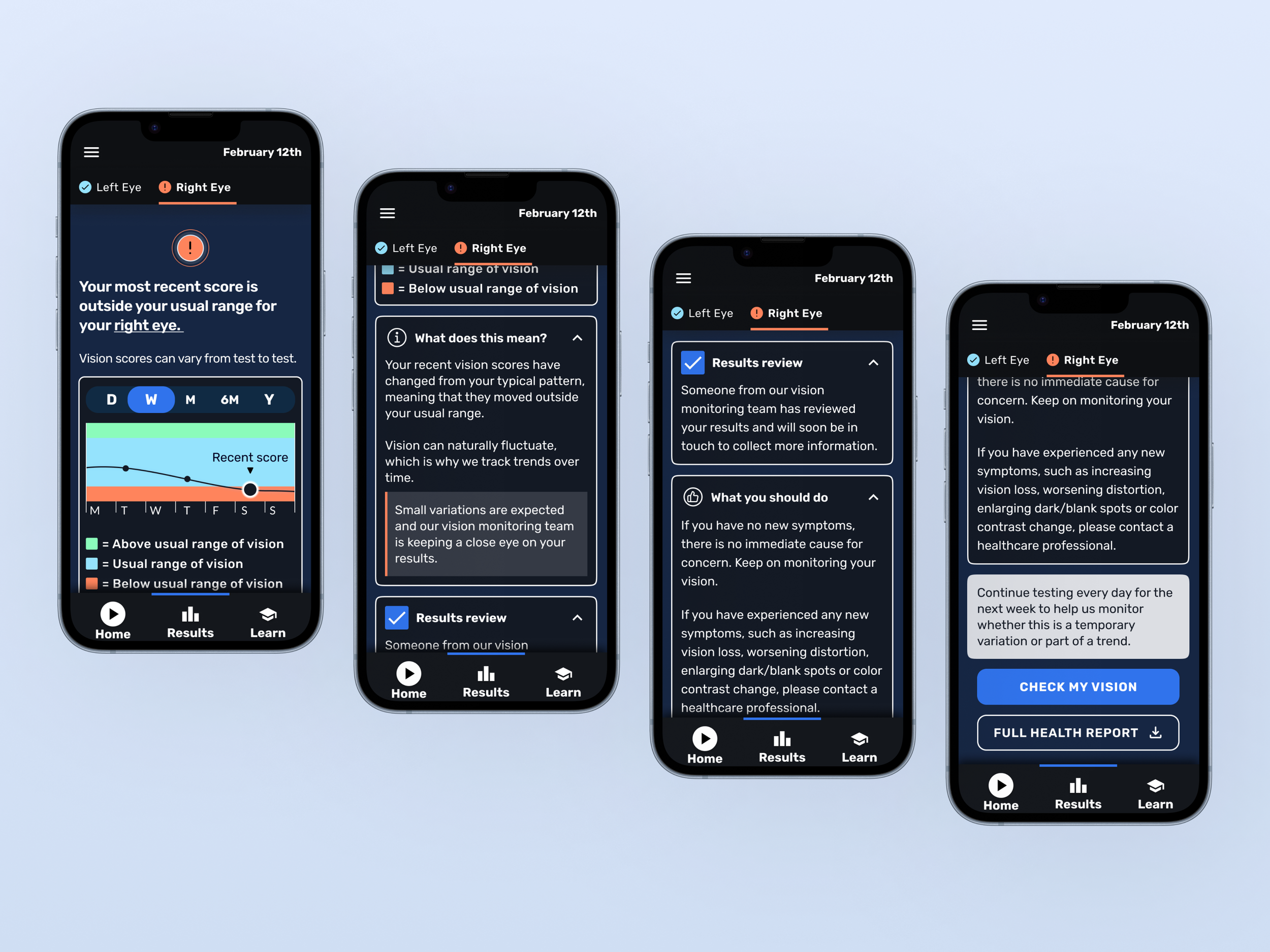

To address this, I reframed the results experience around the concept of a user’s “usual visual range.” Instead of focusing on raw scores or performance, the interface now communicates whether a result sits within or outside a person’s typical pattern. This shifted the narrative from performance to monitoring, reinforcing long-term value.

Results feedback risked causing anxiety or misinterpretation

Testing revealed that certain visual cues and language created unintended alarm, particularly when a result fell outside a typical range.

I established tone and language guardrails to ensure results were observational rather than diagnostic. Alarmist phrasing was removed, and messaging now clearly reflects that changes may be driven by a single recent score, encouraging repeat testing to confirm patterns.

I explored multiple visualisation approaches, including plain-language summaries, status indicators, and trend-based visuals. These were prototyped in Figma and tested with users to assess comprehension, reassurance, and perceived usefulness.

Accessibility was central throughout. Designs were reviewed against WCAG guidelines, colour usage was refined to avoid clinical red signals, and a new typeface was introduced to support larger, more legible interfaces for visually impaired users.
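As an illustration of what a WCAG colour review can involve, the sketch below computes the WCAG 2.x contrast ratio between a text colour and its background. The formula is the published WCAG one; the function names are hypothetical, and the AA thresholds (4.5:1 for normal text, 3:1 for large text) are an assumption, since the conformance level targeted on this project is not stated here.

```python
def _linearise(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_colour: str) -> float:
    """Relative luminance of a colour written as '#rrggbb'."""
    value = hex_colour.lstrip("#")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearise(r) + 0.7152 * _linearise(g) + 0.0722 * _linearise(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colours) up to 21.0 (black on white)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Assumed target: WCAG AA (4.5:1 for normal text, 3:1 for large text)."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold
```

For example, `meets_aa("#767676", "#ffffff")` passes while the slightly lighter `meets_aa("#777777", "#ffffff")` does not, which shows how small colour changes can matter for low-vision users.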

Users lacked a clear understanding of how monitoring works over time

Early-stage users did not fully grasp how their “normal” vision range was established, which reduced motivation to continue testing.

I designed a “Building your usual vision range” experience to clearly explain what happens during the initial phase of use. Progress indicators and simplified explanatory copy helped users understand that multiple tests are required to establish a baseline. This reframed repeated testing as meaningful participation rather than repetition.
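The monitoring model behind these screens can be pictured with a small sketch: a baseline is built from the first few tests, and each later result is described as within or outside the person's usual range. This is not OKKO's clinical algorithm. The minimum number of baseline tests, the "mean plus or minus two standard deviations" rule, and every name below are hypothetical, chosen only to show how the three interface states relate.

```python
from statistics import mean, stdev
from typing import Optional, Sequence, Tuple

MIN_BASELINE_TESTS = 5  # hypothetical: tests needed before a range is shown
RANGE_WIDTH_SD = 2.0    # hypothetical: half-width of the range in standard deviations


def usual_range(history: Sequence[float]) -> Optional[Tuple[float, float]]:
    """Return (low, high) for the usual range, or None while the baseline is still building."""
    if len(history) < MIN_BASELINE_TESTS:
        return None
    centre = mean(history)
    spread = stdev(history)
    return (centre - RANGE_WIDTH_SD * spread, centre + RANGE_WIDTH_SD * spread)


def result_state(history: Sequence[float], new_score: float) -> str:
    """Map a new score to one of three interface states."""
    band = usual_range(history)
    if band is None:
        return "building"  # show the "Building your usual vision range" state
    low, high = band
    if low <= new_score <= high:
        return "within"    # result sits inside the person's typical pattern
    return "outside"       # observational only: prompt a repeat test to confirm
```

With a history of `[70, 72, 71, 69, 73]`, a new score of 72 returns `"within"` and a score of 60 returns `"outside"`, while a history of only two tests returns `"building"`. Keeping the output to three plain states, rather than a raw score, mirrors the shift from performance to monitoring.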

The final designs include:

A results experience centred on “usual visual range” rather than raw scores

Separate left and right eye views to reflect AMD-specific needs

Clear review-status communication without implying urgency

Encouragement to repeat testing to confirm patterns

A structured onboarding/results state explaining how the monitoring model works

High-contrast, low-cognitive-load visual design tailored for visually impaired users

Impact

Established a clearer value narrative for long-term monitoring

Reduced risk of misinterpretation and alarm in results communication

Created a scalable design framework for future engagement experiments

Positioned OKKO as a supportive monitoring companion rather than a standalone testing tool